Serverless Architecture

As an engineer at CrossComm, I have the opportunity to work on a range of backend projects using a multitude of technology stacks. I've written services with Java frameworks such as Spring, Jersey, rest.li and Play, and with Node.js frameworks such as StrongLoop and Express.js. These frameworks have proven reliable, efficient and useful for our customers; however, solutions based on them are also complex to develop and need ongoing management to ensure they run on the latest hardware and software.

In response to this complexity, CrossComm has been increasingly recommending a backend approach that is better for both our developers and our clients: serverless, microservice-based architecture. For our developers, this relatively new architecture enables us to build better backend solutions quickly; for our customers, it offers lower development costs, allows for granular performance scaling, and removes the ongoing headache of maintaining a backend.

Enter Serverless

The motivation behind the “serverless” approach has been to remove the concerns of the underlying server (e.g. hardware and OS) from implementing and scaling a backend, so that developers can stay focused on code rather than server management. And while serverless and microservices have recently become hot topics, the industry has actually been moving towards abstraction of the server for decades. I remember writing my first server in the early '90s; at the time, there were two types of servers for application development: a web server and a database. The lucky, hot startups actually had two physical machines; the rest of us relied on separating the functionality. The application service handled business logic while the database handled the raw data. The typical design would be application code calling stored procedures on the database. This decoupled the services and allowed deployment, development and management to happen independently. You could say this was microservice design with two services; the separation was an improvement, but more could be done.

The dot-com era came about in part due to Java EE and its improvements in server architecture. Multiple JVMs allowed code to be distributed among available server resources. Adding load balancers, cache servers and database pools to the infrastructure meant more management. It also meant more opportunities to separate functionality across the backend. Java applications could communicate across the network, allowing for independent services. Instead of a single application server for all logic, you could build independent services. A modern portal site such as Yahoo could separate its services for calendar from those for contacts and those for email. This was approaching the microservice design philosophy, with multiple services but not necessarily multiple servers.

Looking at more recent times, the arrival of Amazon Web Services, Microsoft Azure, and Google Cloud has allowed developers to create and deploy backends that scale across multiple hardware servers on demand. In addition, the emergence of Backend-as-a-Service (BaaS) platforms enabled fast prototyping and development while removing the daily management of servers. Parse, a popular BaaS, enabled mobile developers to build entire backend services with little effort. It also ended as a warning against relying too heavily on these services; when Parse announced its shutdown, companies including CrossComm and its customers faced new development efforts and timelines.

But it is the advent of Function-as-a-Service (FaaS) implementations such as AWS Lambda and Google Cloud Functions that now allows us to attain scaling on a per-function basis, and it is this latest incarnation of “serverless” that we focus on when addressing the full benefits of serverless microservices.

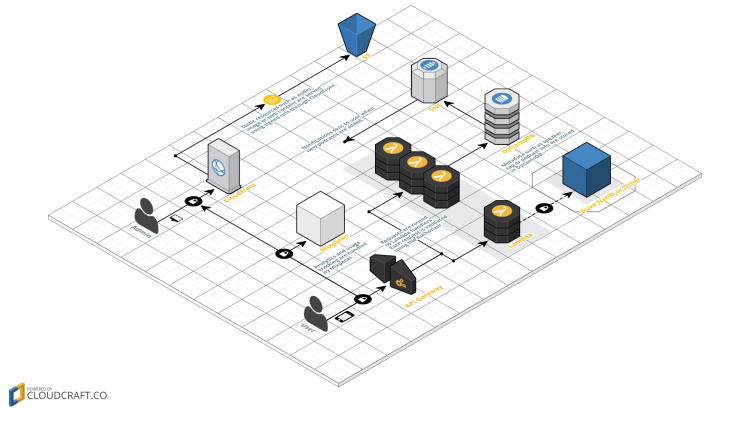

Using a FaaS like Lambda, we now build our backends as a collection of lightweight services for handling specific tasks and data types, each driven by modular, stateless, and event-triggered code functions that are limited in complexity and finite in logic. The benefits to our clients are enormous: with modular functions, these microservices are easier to test, easier to maintain, and easier to build upon in future phases. Because each microservice can be deployed independently of the others, re-deployment of the entire backend is a thing of the past. Equally important, each service can scale independently based on demand; instead of firing up new instances of your backend server to scale, we have truly entered an era where we pay for “compute time” for the use of services instead of “server time” for additional server instances.
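
To make "modular, stateless, and event-triggered" a little more concrete, here is a minimal sketch of what one such function might look like in Python. It is not code from one of our projects: the greeting logic is purely illustrative, the `_response` helper is a hypothetical name, and the event and response shapes (a JSON string under `body`, a `statusCode` in the reply) assume an HTTP trigger in the style of an API Gateway proxy integration. The `(event, context)` signature follows the convention used by Lambda's Python runtime.

```python
import json


def _response(status_code, body):
    # Hypothetical helper: wraps a dict in an HTTP-style response (assumed shape).
    return {"statusCode": status_code, "body": json.dumps(body)}


def handler(event, context):
    """Event-triggered entry point; everything it needs arrives in the event."""
    # No module-level state is read or written, so any instance of the
    # function can serve any request and instances can scale independently.
    try:
        payload = json.loads(event.get("body") or "{}")
    except json.JSONDecodeError:
        return _response(400, {"error": "body must be valid JSON"})

    if not isinstance(payload, dict):
        return _response(400, {"error": "body must be a JSON object"})

    name = payload.get("name")
    if not isinstance(name, str) or not name.strip():
        return _response(400, {"error": "name is required"})

    # The function does one small, finite piece of work and returns.
    return _response(200, {"greeting": f"Hello, {name.strip()}!"})
```

Because the function depends only on its input, it can be exercised locally by calling `handler({"body": '{"name": "Ada"}'}, None)` with no server running, which is a large part of why functions like this are easy to test.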

The bottom line for our clients is that the serverless microservices approach leads to:

- Faster and safer deployment of backend features
- Lower initial and ongoing development costs
- Easier and more granular performance scalability

It's no surprise that some of the largest sites in the world today rely on this separation of logic. And serverless architecture is good for us developers as well; coding is more rewarding and fun when we can focus on writing elegant code that solves specific objectives instead of getting bogged down in bloated codebases, server administration and scalability management.

Serverless Magic

The term “serverless” is a bit of a misnomer, as it suggests that the server has magically disappeared from the equation. In fact, even serverless solutions need to be powered by a server farm somewhere. But FaaS implementations like AWS Lambda are a natural realization of the historical trend towards increasing backend functionality and scalability while decreasing complexity and costs. With serverless architecture, engineers can finally build robust, scalable backend functionality without concern for the servers themselves. And even for a veteran backend developer who has seen this coming, it is still something akin to magic.

In my next post, I’ll outline how we used this architecture to build a robust, reliable and scalable system for one of our customers.